Home Page Spotlights

A study of people in 15 countries reveals that while everyone favors rhythms with simple integer ratios, the specific biases vary considerably across societies.

Designing machines to think like humans provides insight into intelligence itself

The history and future of ChatGPT and its siblings, in the newly published book by the Italian scientist who rose to fame at the Massachusetts Institute of Technology. «The genie is out of the bottle, but humans are more dangerous than robots»

Join us on a journey through the history of artificial intelligence (AI) from its early conceptual foundations to today’s Gen AI breakthroughs and tomorrow’s potential futures with Amnon Shashua and Tomaso Poggio on April 18th.

Joel Oppenheim was a wise and generous man. We were fortunate to work with him when he joined the CBMM External Advisory Committee (EAC) at its inception in 2014, and got to know him well...

More than 80 students and faculty from a dozen collaborating institutions became immersed at the intersection of computation and life sciences and forged new ties to MIT and each other.

Rodney Brooks, co-founder of iRobot, kicks off an MIT symposium on the promise and potential pitfalls of increasingly powerful AI tools like ChatGPT.

Robots that can fit multiple items into a limited space could help pack a suitcase or a rocket to Mars.

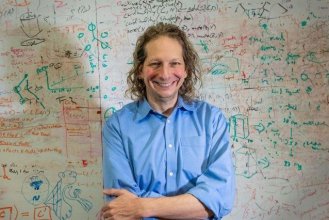

Tenenbaum is best known for theories of cognition as Bayesian inference, with a focus on explaining how humans can learn so much so quickly, from so little data.

A journalist and a pioneer of artificial intelligence discuss the dawn of a new "general" technology which, like electricity or the computer, is destined to transform society, the economy, and daily life, bringing both risks and opportunities.

A sincere thank you to all speakers and attendees of 'CBMM10 - A Symposium on Intelligence: Brains, Minds, and Machines.' And an extra thank you to those who made it possible! ~Tomaso Poggio

Research on Intelligence in the Age of AI: On which critical problems should Neuroscience, Cognitive Science, and Computer Science focus now? Consider the points of view of society, industry, and science.

Language and Thought: Is natural language the language of thought? LLMs as models of human language and thought. Are LLMs aligned with neuroscience and with human behavior? What is still missing?

Neuroscience to AI and back again: Review of progress and success stories in understanding perception in primates and replicating it in machines. Key open questions. Synergies. CNNs vs transformers as models of cortex.

Interacting with the physical world: Which aspects of human intelligence require embodiment? Motor control: was it the key to the development of human intelligence? Is embodiment necessary for consciousness?