The following story describes research by CBMM PIs Antonio Torralba and Joshua Tenenbaum, published in a jointly authored paper: https://arxiv.org/abs/2004.09476

Music gesture artificial intelligence tool developed at the MIT-IBM Watson AI Lab uses body movements to isolate the sounds of individual instruments.

Kim Martineau | MIT Quest for Intelligence

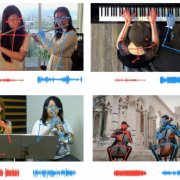

We listen to music with our ears, but also our eyes, watching with appreciation as the pianist’s fingers fly over the keys and the violinist’s bow rocks across the ridge of strings. When the ear fails to tell two instruments apart, the eye often pitches in by matching each musician’s movements to the beat of each part.

A new artificial intelligence tool developed by the MIT-IBM Watson AI Lab leverages the virtual eyes and ears of a computer to separate similar sounds that are tricky even for humans to differentiate. The tool improves on earlier iterations by matching the movements of individual musicians, via their skeletal keypoints, to the tempo of individual parts, allowing listeners to isolate a single flute or violin among multiple flutes or violins.

Potential applications for the work range from sound mixing and turning up the volume of an instrument in a recording to reducing the confusion that leads people to talk over one another on video-conference calls. The work will be presented at the virtual Computer Vision and Pattern Recognition conference this month.

“Body keypoints provide powerful structural information,” says the study’s lead author, Chuang Gan, an IBM researcher at the lab. “We use that here to improve the AI’s ability to listen and separate sound.”

In this project, and in others like it, the researchers have capitalized on synchronized audio-video tracks to recreate the way that humans learn. An AI system that learns through multiple sense modalities may be able to learn faster, with less data, and without humans having to add pesky labels to each real-world representation. “We learn from all of our senses,” says Antonio Torralba, an MIT professor and co-senior author of the study. “Multi-sensory processing is the precursor to embodied intelligence and AI systems that can perform more complicated tasks.”

...

Other authors of the CVPR music gesture study are Deng Huang and Joshua Tenenbaum at MIT.

Read the entire article on the MIT News website using the link below.