April 2, 2024 - 11:15 am

September 9 - December 13, 2024

Scientific Overview

The quest to understand intelligence is one of the great scientific endeavors—on par with quests to understand the origins of life or the foundations of the physical world. Several scientific communities have made significant progress in fields like animal cognition, cognitive science, collective intelligence, and artificial intelligence, as well as the social and behavioral sciences. Yet these...

March 26, 2024 - 4:00 pm

Singleton Auditorium (46-3002)

Giorgio Metta, Istituto Italiano di Tecnologia (IIT)

Abstract: The iCub is a humanoid robot designed to support research in embodied AI. At 104 cm tall, the iCub is the size of a five-year-old child. It can crawl on all fours, walk, and sit up to manipulate objects. Its hands have been designed to support sophisticated manipulation skills. The iCub...

March 19, 2024 - 4:00 pm

Room 45-792

The Language Mission broadly aims to understand the relationship between language and human intelligence. Scientific goals include understanding how humans and machine learning models interpret and generate language and determining the role of language in the acquisition, representation, and use of...

March 19, 2024 - 2:00 pm

Designing machines to think like humans provides insight into intelligence itself

By George Musser

The dream of artificial intelligence has never been just to make a grandmaster-beating chess engine or a chatbot that tries to break up a marriage. It has been to hold a mirror to our own intelligence, that we might understand ourselves better. Researchers seek not simply artificial intelligence but artificial general intelligence, or AGI—a system...

March 12, 2024 - 4:00 pm

Singleton Auditorium (46-3002)

Tom Griffiths, Princeton University

Abstract: Recent rapid progress in the creation of artificial intelligence (AI) systems has been driven in large part by innovations in architectures and algorithms for developing large scale artificial neural networks. As a consequence, it’s natural to ask what role abstract principles of...

March 4, 2024 - 1:15 pm

A study of people in 15 countries reveals that while everyone favors rhythms with simple integer ratios, biases can vary quite a bit across societies.

Anne Trafton | MIT News

When listening to music, the human brain appears to be biased toward hearing and producing rhythms composed of simple integer ratios — for example, a series of four beats separated by equal time intervals (forming a 1:1:1 ratio).

However, the favored ratios can vary greatly...

February 27, 2024 - 3:00 pm

McGovern Reading Room (46-5165)

Arash Afraz, Ph.D., Chief of the Unit on Neurons, Circuits and Behavior, Laboratory of Neuropsychology, NIMH, NIH

Abstract: Local perturbation of neural activity in high-level visual cortical areas alters visual perception. Quantitative characterization of these perceptual alterations holds the key to understanding the mapping between patterns of neuronal activity and elements of perception. The complexity and...

February 14, 2024 - 3:30 pm

by Jonathan Shaw

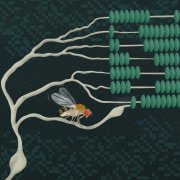

Could an artificial neural network connected to the brain of a living animal improve its performance on a task, such as the ability to find food? A strength of biologically based intelligence is that it performs well in novel situations by applying principles learned through experience in other contexts. Artificial intelligence (AI), on the other hand, can rapidly process huge quantities of information and thus detect...

February 14, 2024 - 2:00 pm

Singleton Auditorium (46-3002)

Alexander Borst, Max-Planck-Institute for Biological Intelligence, Martinsried, Germany

*Due to the weather forecast for Cambridge, MA, on Tuesday, February 13th, this talk will be held on Wednesday, February 14th, at 2:00 PM*

Abstract: Detecting the direction of image motion is important for visual navigation, predator avoidance and prey capture, and thus essential for the survival...

February 6, 2024 - 4:00 pm

Singleton Auditorium (46-3002)

Yael Niv, Princeton University

Abstract: No two events are alike. But still, we learn, which means that we implicitly decide what events are similar enough that experience with one can inform us about what to do in another. Starting from early work by Sam Gershman, we have suggested that this relies on parsing of incoming...

January 30, 2024 - 1:45 pm

More than 80 students and faculty from a dozen collaborating institutions became immersed at the intersection of computation and life sciences and forged new ties to MIT and each other.

David Orenstein | The Picower Institute for Learning and Memory

Starting on New Year’s Day, when many people were still clinging to holiday revelry, scores of students and faculty members from about a dozen partner universities instead flipped open their laptops...

January 29, 2024 - 10:15 am

Joel Oppenheim was a wise and generous man. We were fortunate to work with him when he joined the CBMM External Advisory Committee (EAC) at its inception in 2014, and got to know him well because he served as an advisor for our diversity and outreach programs. Joel was dedicated to helping others. Though he was already a senior figure in the field and not always in good health, he attended every EAC meeting, always giving helpful feedback and...

January 18, 2024 - 9:45 am

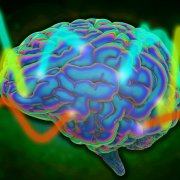

Across mammalian species, brain waves are slower in deep cortical layers, while superficial layers generate faster rhythms.

Anne Trafton | MIT News

Paper: “A ubiquitous spectrolaminar motif of local field potential power across the primate cortex”

Throughout the brain’s cortex, neurons are arranged in six distinctive layers, which can be readily seen with a microscope. A team of MIT and Vanderbilt University neuroscientists has now found that...

January 12, 2024 - 12:00 pm

[translated by Google from Italian]

Mario Paternostro and Franco Manzitti interview Tomaso Poggio, the Genoese who changed the world

Tomaso Poggio, Genoese, a professor at MIT in Boston, has been considered one of the inventors of artificial intelligence for many years.

He is in Genoa these days to present his book “Cervelli, menti, algoritmi,” written with Marco Magrini. Yesterday he held a crowded conference-lecture to scientific readings...

December 15, 2023 - 12:15 pm

“Minimum viewing time” benchmark gauges image recognition complexity for AI systems by measuring the time needed for accurate human identification.

Rachel Gordon | MIT CSAIL

Imagine you are scrolling through the photos on your phone and you come across an image that at first you can’t recognize. It looks like maybe something fuzzy on the couch; could it be a pillow or a coat? After a couple of seconds it clicks — of course! That ball of fluff is...