Research Meeting: Module 3

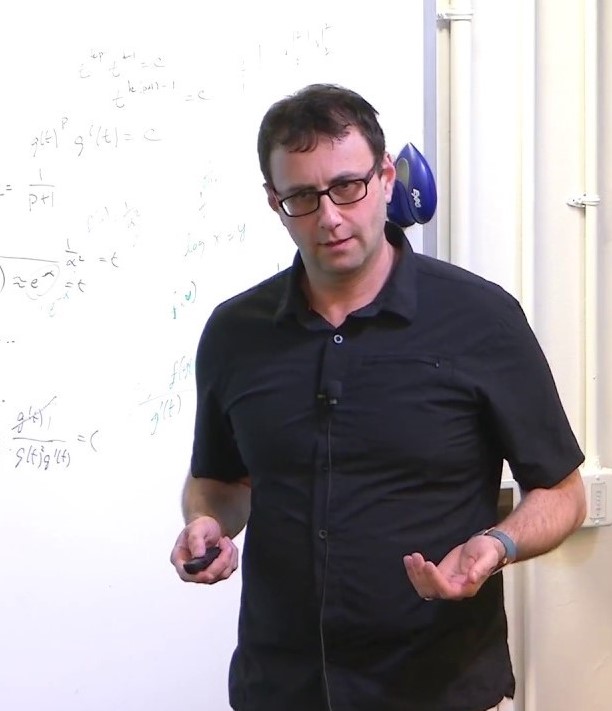

Organizer: Hector Penagos, Frederico Azevedo
Organizer Email: cbmm-contact@mit.edu

Using task-optimized neural networks to understand why brains have specialized processing for faces

Previous research has identified multiple functionally specialized regions of the human visual cortex and has started to characterize the precise function of these regions. But why do brains have functional specialization in the first place, and why do we have the particular specializations we do (e.g., for faces or scenes, but apparently not for food or cars)? Here, we address these questions using the well-studied case of face selectivity. Specifically, we used deep convolutional neural networks (CNNs) to test whether face-specific regions are segregated from object cortex in the primate visual system because the optimal feature spaces for face and object perception differ from each other.

We trained two separate CNNs with the AlexNet architecture to categorize either faces or objects. The face-trained CNN performed worse on object categorization than the object-trained CNN, and vice versa, demonstrating that the learned features differ for the two tasks. To determine whether a CNN could learn a common feature space, we trained CNNs on both tasks with a branched architecture, varying the number of layers shared across tasks (Kell et al., 2018). The fully shared network performed worse than the separate CNNs, suggesting a cost to performing both tasks in one system. However, as in the primate visual system, early layers could be shared without impairing performance.

Do these results generalize to architectures with larger capacity? We trained three networks with the VGG16 architecture: one on faces, one on objects, and one on both. In contrast to the AlexNet results, the dual-task CNN performed as well as the separate networks. To test whether this network had discovered covert task segregation, we performed lesion experiments and found that lesioning face-specific features selectively impaired face performance. This result suggests that functional specialization for faces emerges spontaneously in networks optimized for both face and object tasks.
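To make the branched-architecture manipulation concrete, here is a minimal sketch (not the authors' code) of how the branch point determines which AlexNet layers are shared and which are duplicated per task. It assumes AlexNet's eight weight layers (conv1 to conv5, fc6 to fc8); the names `branched_layout`, `BranchedLayout`, and the `face_`/`object_` prefixes are hypothetical. It also assumes that even the "fully shared" network keeps a separate readout layer per task, because face identities and object categories use different label sets; the abstract does not specify this.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple

# AlexNet's eight weight layers: five convolutional, three fully connected.
ALEXNET_LAYERS: Tuple[str, ...] = (
    "conv1", "conv2", "conv3", "conv4", "conv5", "fc6", "fc7", "fc8",
)


@dataclass
class BranchedLayout:
    """Which layers are shared across tasks and which are task-specific."""
    shared: List[str]
    branches: Dict[str, List[str]]

    def num_layer_instances(self) -> int:
        # Shared layers exist once; branch layers are duplicated per task.
        return len(self.shared) + sum(len(b) for b in self.branches.values())


def branched_layout(
    layers: Sequence[str],
    n_shared: int,
    tasks: Sequence[str] = ("face", "object"),
) -> BranchedLayout:
    """Share the first `n_shared` layers; give each task its own copy of the rest.

    Assumption (hypothetical): every task keeps its own readout layer, so at
    most len(layers) - 1 layers can be shared.
    """
    if not 0 <= n_shared <= len(layers) - 1:
        raise ValueError(f"n_shared must be in [0, {len(layers) - 1}], got {n_shared}")
    shared = list(layers[:n_shared])
    branches = {task: [f"{task}_{name}" for name in layers[n_shared:]] for task in tasks}
    return BranchedLayout(shared=shared, branches=branches)


if __name__ == "__main__":
    # Sweep the branch point, from two separate networks (0 shared layers)
    # to a network that shares everything except the task-specific readouts.
    for k in range(len(ALEXNET_LAYERS)):
        layout = branched_layout(ALEXNET_LAYERS, k)
        last_shared = layout.shared[-1] if layout.shared else "-"
        print(k, last_shared, layout.num_layer_instances())
```

With `n_shared=0` the layout amounts to two separate networks (16 layer instances); with `n_shared=5` the convolutional stack is shared and each task gets its own fc6 to fc8 (11 instances). Sweeping `n_shared` is one way to ask how deep sharing can go before task performance suffers.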
